How we tackled texturing for a large open world in Conan Exiles in a very short amount of time

In this blog post we are doing something a bit different. Our tech team will offer some insights into how we tackled texturing issues alongside Graphine in order to make sure we managed to run the game on a wider array of graphics hardware while still maintaining visual quality. While this is primarily a post targeted at other developers, it might be interesting to a wider audience to get a glimpse into the development process and challenges.

In Conan Exiles we use a large number of high resolution textures (4K, some 2K, even a couple of 8K ones). We have a pretty large open world, and numerous items, placeables, building pieces and characters, which all adds up to a significant amount of memory. Disk space is not a very critical resource, but with VRAM (or shared memory on consoles) in very short supply, we knew early on that we were going to have trouble fitting all of this in memory. As expected, a few months from our early access release we ended up with blurry textures on machines with 2GB of VRAM, and on 1GB VRAM machines we would either fail to allocate GPU resources entirely or suffer from massive stalls as data was swapped between video and system memory. Even on 4GB VRAM setups we would still notice the odd blurry texture.
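To give a sense of scale, here is a back-of-the-envelope sketch rather than a measurement from the game. It assumes block-compressed textures at 8 bits per pixel (as with BC3 or BC7) and a full mip chain; this post does not state which formats are used, and `texture_bytes` is a hypothetical helper written for this example.

```python
MIB = 1024 * 1024


def texture_bytes(width, height, bits_per_pixel=8, with_mips=True):
    """Approximate GPU memory for one block-compressed texture.

    Assumption: 8 bits per pixel (BC3/BC7-style). Use 4 for BC1-style formats.
    """
    total = 0
    w, h = width, height
    while True:
        # Block compression stores 4x4 texel blocks, so small mips round up to 4x4.
        bw = max(4, (w + 3) // 4 * 4)
        bh = max(4, (h + 3) // 4 * 4)
        total += bw * bh * bits_per_pixel // 8
        if not with_mips or (w == 1 and h == 1):
            break
        w, h = max(1, w // 2), max(1, h // 2)
    return total


for size in (2048, 4096, 8192):
    print(f"{size}x{size}: {texture_bytes(size, size) / MIB:.1f} MiB with mips")
```

Under those assumptions a single 4K texture with its mips costs a little over 21 MiB, so around a hundred of them would fill 2GB before counting render targets, geometry or anything else.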

Above: [Blurry textures on 2GB VRAM machines]

Our options were:

- Use much lower resolution textures (by aggressively streaming in only the higher, lower resolution mip levels), using the built-in Unreal Engine system of texture groups with different streaming rules. This would have resulted in much lower visual quality, and would have been relatively time consuming to set up well.

- Ensure that distinct parts of the game world had a budget for unique textures which are only visible in these areas, in addition to common texture pools. This would have allowed us to stream entire texture sets in and out based on player position, and reduced the need for continuous streaming. This approach, however, would have required significant authoring work (on top of the code work), so it was not a viable option at that point in production. Setting up and sticking to memory budgets is difficult even when they are defined upfront, and almost impossible to introduce late in production.

- Use Granite (a middleware), which in theory would be able to serve as a complete solution for texture streaming and compression. (Very) roughly speaking, Granite is a virtual texturing solution, which stores all the texture data it handles offline in its own chunked data format. At runtime, it adds one more render target to your G-buffer set, storing exact coverage information for the various textures. It then uses this information to determine what needs to be streamed in and out, allowing you to end up with pretty much perfect pixel density for the active resolution, at close to the minimum required texture pool size. In this post we will primarily focus on our integration of it and the workflow we ended up using, but you can find more detailed information on it here: http://graphinesoftware.com/products/granite-sdk
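The general idea behind feedback-driven virtual texturing can be sketched in a few lines. This is a simplified illustration and not Granite's API or implementation: the tile id tuple `(texture, mip, x, y)`, the `TileCache` class and the least-recently-used eviction policy are all assumptions made for the example. It supposes the coverage render target has already been read back and decoded into a list of tile ids seen on screen.

```python
from collections import OrderedDict


class TileCache:
    """Fixed-size pool of resident tiles with least-recently-used eviction."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.resident = OrderedDict()  # tile id -> None, least recently used first

    def update(self, feedback):
        """Process one frame of feedback (tile ids visible on screen).

        Returns (tiles to stream in, tiles evicted from the pool).
        """
        needed = set(feedback)  # many pixels map to the same tile
        to_load, evicted = [], []
        for tile in sorted(needed):
            if tile in self.resident:
                self.resident.move_to_end(tile)  # mark as recently used
            else:
                to_load.append(tile)
                self.resident[tile] = None
        # Tiles not seen this frame now sit at the front and are evicted first.
        while len(self.resident) > self.capacity:
            old, _ = self.resident.popitem(last=False)
            evicted.append(old)
        return to_load, evicted


cache = TileCache(capacity=3)
print(cache.update([("rock", 0, 0, 0), ("rock", 0, 1, 0), ("rock", 0, 0, 0)]))
print(cache.update([("rock", 0, 1, 0), ("sand", 1, 0, 0), ("sand", 1, 1, 0)]))
```

The point of the sketch is that the pool size is fixed by `capacity` and not by how many textures exist in the game, which is what makes this kind of approach attractive when memory is tight. A real system would also have to handle a frame that needs more tiles than the pool can hold, typically by falling back to lower resolution mips.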

On paper, Granite sounded like exactly what we needed: not only would it allow us to get better visual quality on lower end PCs, it would also allow us to limit our texture pool size significantly, which is extremely valuable on consoles where you only have around 5GB of shared system and video memory at your disposal. In practice, there was a major blocker: the majority of our textures and materials had already been created and set up in Unreal, and Granite required the data to be set up differently, through their tool, which would convert the textures into their streaming tiled format.

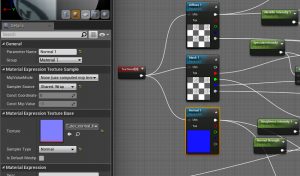

To resolve this issue, the Graphine engineers accelerated the development of a new import workflow for Unreal, which uses material graph nodes in materials / master materials to find all relevant source textures and convert them into their format. This made it possible for us to get Granite integrated and working with only limited asset work.

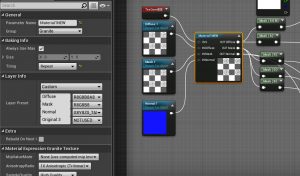

The new process involved replacing the regular texture sampling nodes in our Unreal materials with these new Granite texture streaming nodes, which take the same source textures as input as the original sampling nodes. This does not make the material use Granite immediately (there is no generated Granite data yet), but allows the material to be “baked”, which in turn makes the shader use the Granite VT sampling code, as well as converting the relevant textures to the Granite format.

The Granite streaming nodes can take multiple textures as input (up to 4) as long as they use the same UVs. During the bake process, each of these texture stacks (or Granite nodes) is tiled and the tiles are compressed, before being stored in an intermediate file (GTEX), which can be cached to accelerate future bakes of these textures. This data is then used to generate paged files (GTP), each of which contains a number of nodes. The size and setup of these pages is chosen to get the best streaming performance.
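The benefit of caching an intermediate result per texture stack can be illustrated with a content-addressed cache. This is only a sketch of the general technique: how Granite actually keys or validates its GTEX files is not described here, and `stack_key` and `IntermediateCache` are hypothetical names. The expensive tile-and-compress step is replaced by a counter.

```python
import hashlib


def stack_key(textures):
    """Hash a stack of (name, source_bytes) pairs that share one UV set."""
    if not 1 <= len(textures) <= 4:
        raise ValueError("a stack holds between 1 and 4 textures")
    digest = hashlib.sha256()
    for name, data in textures:
        digest.update(name.encode("utf-8"))
        digest.update(b"\0")  # separator so name and content cannot run together
        digest.update(hashlib.sha256(data).digest())
    return digest.hexdigest()


class IntermediateCache:
    """Keeps one intermediate result per unique stack content."""

    def __init__(self):
        self.entries = {}
        self.bakes = 0  # how many times the expensive step actually ran

    def get_or_bake(self, textures):
        key = stack_key(textures)
        if key not in self.entries:
            self.bakes += 1  # stands in for tiling and compressing the stack
            self.entries[key] = f"intermediate-{key[:8]}"
        return self.entries[key]


cache = IntermediateCache()
stack = [("T_Rock_D", b"albedo pixels"), ("T_Rock_N", b"normal pixels")]
cache.get_or_bake(stack)
cache.get_or_bake(stack)  # unchanged inputs: served from the cache
print(cache.bakes)
```

With a scheme like this, a second bake of unchanged textures skips the costly work, and only a stack whose source data changed has to be processed again.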

The bake process also modifies the material UAssets to use the Granite VT data that was generated.

Luckily for us, given our material instance count, the system supports editing only the master materials, and baking all instances in one operation. There are however a couple of caveats:

- This is a (very) time consuming operation for large datasets. On Exiles it can take 5+ hours.

- The Granite node we used was based on a Granite baking node they were working on, which allows you to bake parts of your material graph into a texture. The downside of this is that changing the graph or shader parameters invalidates the bake, meaning that whenever artists work on a material they are effectively reverting it to use non-Granite textures.

- At the time of our integration, the generated Granite data was batched together into large files, which were tied to the Granite node setup rather than to the individual textures or materials. This was done to increase streaming performance, but it also meant that any single material change could invalidate the bakes of hundreds or thousands of materials which shared Granite nodes with identical setups. Due to this form of batching, one would want to avoid submitting the final generated Granite files to source control, as they are often invalidated, take up lots of disk space, and most of all are only complete when all materials are baked. If an artist baked just one material and submitted the generated GTS/GTP files, those files would only contain the data for that specific material. (This is no longer the case for our project: generated Granite page files are now linked to individual nodes rather than node sets. This makes it less likely for artists to invalidate bake data, and thus leads to smaller patches and faster subsequent bakes, at the cost of some streaming performance.)
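The difference between the two batching schemes in the last caveat can be shown with a toy model. It is an illustration only: the material names, the tuple used to describe a node setup and the `invalidated_by_edit` function are all made up for the example and do not reflect Granite's file format.

```python
from collections import defaultdict


def invalidated_by_edit(node_setups, edited, per_node_files):
    """Return the materials whose baked data is invalidated by editing one material.

    node_setups: material name -> hashable description of its node setup.
    per_node_files: False models files batched per node setup,
                    True models files linked to individual nodes.
    """
    if per_node_files:
        return [edited]
    shared = defaultdict(list)
    for material, setup in node_setups.items():
        shared[setup].append(material)
    # Every material batched into the same file as the edited one is affected.
    return sorted(shared[node_setups[edited]])


setups = {
    "M_Rock_A": ("albedo", "normal", "orm"),
    "M_Rock_B": ("albedo", "normal", "orm"),
    "M_Sand": ("albedo", "normal"),
}
print(invalidated_by_edit(setups, "M_Rock_A", per_node_files=False))
print(invalidated_by_edit(setups, "M_Rock_A", per_node_files=True))
```

In the batched model, an edit to one material drags every material with the same setup along with it, while in the per-node model only the edited material needs a new bake.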

At this point we need to briefly go over our process for making versions of our game:

Source code for our game is stored in a Perforce server. Data is stored in a custom source control system we call “dataset system”.

We have a build system made up of a bunch of machines which will, on demand, compile our game, run an Unreal cook of the data, and sign the binaries (plus a few other steps) before submitting the resulting packaged game to Perforce and labelling it with the version number. We then have some scripts which fetch this and push it to Steam for testing and eventual release.

The original idea for granite was to have the artists bake the materials they edit, and submit to source control the intermediate GTEX files if changed, but not the actual bake result data. The build system would then when packaging a version fetch those GTEX files, and bake all assets, using the resulting granite data when cooking the content. The hope was that the GTEX intermediate files would make the bake process fast enough to be able to run it every time we publish a version.

Unfortunately, this did not work: artists did not want to deal with baking materials locally, as it could take minutes and was an error prone process. On top of that, the Bake All process was still much too slow, even with the GTEX files available, to be run for every publish.

Our main goal was to not add any overhead for the art team, and to not add any significant time increase to our build process. To achieve that, after some back and forth we came up with the following workflow:

- Our 2 technical artists on the project would be responsible for updating the master materials to use the Granite streaming nodes. The rest of the art team would largely ignore Granite.

- We set up a build machine to generate a bake on a regular basis (a couple of times a week). This is done at night to avoid contention with exclusively locked material UAsset files artists might be working on during the day.

- This machine does not submit any intermediate files, but will keep them locally to speed up subsequent bakes. If ever they are deleted for some reason, the next bake will just be slower. The resulting granite data is submitted to our data source control system.

- Our build system will simply sync all content as usual, and get a mostly up to date set of granite data this way. If ever an artist has touched a material since the last full bake, that material will not use granite. In practice this is not a problem though as main development happens in different branches/datasets than the stabler ones we make release candidate versions from.

Figuring out the best way to handle this took a couple of months for one of our engineers and our tech artists, but it allowed us to integrate Granite extremely late in development, in a way that was relatively transparent to the bulk of the development team, and to solve our issues with texturing. There is of course a lot more to cover on the topic, especially regarding how we updated our materials to get the best balance of visual fidelity, performance, and memory usage. We might try to cover those in a subsequent post. In the meantime, if you have any questions feel free to ask!